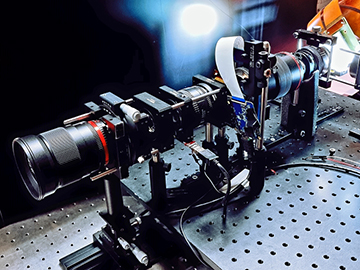

Using the optical setup shown here, researchers combined two different light-field display technologies to project large-scale 3D images with almost diffraction-limited resolution. [Image: Byoungho Lee, Seoul National University]

Researchers at a South Korean university have melded two scene-generating techniques—multifocal display and integral imaging—to create large-scale, ultrahigh-definition 3D pictures (Opt. Lett., doi:10.1364/OL.431156).

The combined, all-optical technique, still in prototype form, avoids the digital-processing bottlenecks of existing light-field displays. It also allows 3D viewing without special glasses or goggles, a capability known as autostereoscopy.

Light fields, heavy processing

Over the past decade, strong interest in headwear-free 3D viewing has driven the development of light-field displays, which modulate both the direction and the intensity of light. However, a flat-panel display has a very large number of pixels, and each pixel must be represented by many rays, so these displays demand huge amounts of computational processing power. That demand grows with screen size, which runs counter to end users' desire for ever-larger displays.
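To see why the processing load balloons, consider a rough count: a light-field display has to address every pixel once for each viewing direction it renders. The Python sketch below assumes a hypothetical 0.5 mm pixel pitch and 10 × 10 views per pixel (neither figure comes from the study), and the helper `ray_count` is ours, for illustration only.

```python
# Rough, illustrative estimate of how a light-field display's ray budget
# grows with screen size. All numbers are hypothetical, not from the study.

def ray_count(width_m: float, height_m: float, pixel_pitch_m: float, views_per_axis: int) -> int:
    """Rays per frame = spatial pixels x angular views (views_per_axis squared)."""
    cols = round(width_m / pixel_pitch_m)
    rows = round(height_m / pixel_pitch_m)
    return cols * rows * views_per_axis ** 2


if __name__ == "__main__":
    # Hypothetical: 0.5 mm pixel pitch, 10 x 10 views per pixel.
    for side in (0.5, 1.0, 2.0):
        n = ray_count(side, side, 0.5e-3, 10)
        print(f"{side:.1f} m square screen: {n:,} rays per frame")
```

With the pixel pitch held fixed, doubling the screen's side length quadruples the number of rays, which is why the computation scales poorly as displays get bigger.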

A projection-based technique called integral imaging, first conceptualized in the early 1900s, reproduces a light field by capturing a scene through a 2D microlens array as a set of "elemental images" and then replaying those images through a similar array. The method can enlarge images better than a standalone multifocal display, but at the cost of image quality. Its elemental images also contain a large amount of redundant data, which makes the technique inefficient.
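A minimal sketch of the capture ("pickup") step, assuming the common pinhole approximation of a microlens array: each lens records a scene point along the chief ray through its center, so the point lands at a slightly different spot in each elemental image. The function `pickup` and all dimensions (1 mm lens pitch, 3 mm lens-to-sensor gap, a 5 × 5 array) are hypothetical and not taken from the study.

```python
# Pinhole-array model of integral-imaging pickup (an idealization: no lens
# aberrations, no diffraction). Lens array sits at z = 0; the elemental-image
# plane sits a distance `gap` behind it; scene points have z > 0.

def pickup(point, pitch, gap, n_lenses):
    """Project one 3D point through an n x n pinhole array.

    Returns {(i, j): (u, v)}: local coordinates of the point inside the
    elemental image behind lens (i, j), keeping only hits that fall within
    that lens's own pitch-sized region.
    """
    x, y, z = point
    hits = {}
    offset = (n_lenses - 1) / 2
    for i in range(n_lenses):
        for j in range(n_lenses):
            cx = (i - offset) * pitch  # lens center, x
            cy = (j - offset) * pitch  # lens center, y
            # Chief ray through the lens center; similar triangles give an
            # inverted, demagnified position behind the lens.
            u = -gap * (x - cx) / z
            v = -gap * (y - cy) / z
            if abs(u) <= pitch / 2 and abs(v) <= pitch / 2:
                hits[(i, j)] = (u, v)
    return hits


if __name__ == "__main__":
    # Hypothetical geometry in millimetres: point 30 mm in front of the array.
    hits = pickup((0.0, 0.0, 30.0), pitch=1.0, gap=3.0, n_lenses=5)
    print(f"point recorded in {len(hits)} elemental images")
    for key in [(2, 2), (3, 2), (4, 2)]:
        print(key, hits[key])
```

In this example a single point appears in all 25 elemental images, each time slightly shifted. Those shifted copies encode parallax, and they are also the redundancy that makes integral-imaging data so bulky.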

Two methods, better than one

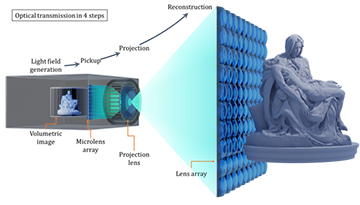

The new display optically transforms the object generated by the multifocal display into a projection volume for integral imaging, mapping the rays automatically through a microlens array (optical pickup). A projection lens then enlarges this transformed information onto a large screen. After projection, the light passes through a second lens array that reconstructs the object volume, much as in a conventional integral-imaging system. [Image: Byoungho Lee, Seoul National University]
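The enlargement step can be approximated, very roughly, with the thin-lens equation 1/f = 1/s_o + 1/s_i, where the lateral magnification is m = -s_i/s_o. The sketch below treats the projection lens as a single ideal thin lens; the function name `projection`, the 50 mm focal length and the 2.05 m lens-to-screen distance are made-up values, not the prototype's.

```python
# Thin-lens model of a projection lens (an idealization; real projection
# optics are multi-element). Distances are in metres.

def projection(f_m: float, screen_dist_m: float):
    """Given focal length and lens-to-screen distance (image distance s_i),
    return (object distance s_o, lateral magnification m)."""
    if screen_dist_m <= f_m:
        raise ValueError("screen must lie beyond the focal length for a real image")
    s_o = 1.0 / (1.0 / f_m - 1.0 / screen_dist_m)  # from 1/f = 1/s_o + 1/s_i
    m = -screen_dist_m / s_o                       # negative sign: image is inverted
    return s_o, m


if __name__ == "__main__":
    s_o, m = projection(0.05, 2.05)  # hypothetical 50 mm lens, 2.05 m throw
    print(f"object distance {s_o * 1000:.2f} mm, magnification {m:.1f}")
```

With these numbers the source must sit about 51 mm behind the lens, and the image on the screen is 40 times larger and inverted.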

A team led by OSA Fellow Byoungho Lee, an engineering professor at Seoul National University, Republic of Korea, developed a prototype device bringing together the best of both worlds. The light path of the optical structure incorporates a backlight, relay lenses on either side of a focus-tunable lens, the image plane, a microlens array, the projection lens, a macrolens array and the reconstruction plane.
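A multifocal display presents images at several depths, and a focus-tunable lens between relay lenses is one way to move the image plane. The paraxial (ABCD ray-transfer-matrix) sketch below assumes a 4f relay with the tunable lens at the shared focal plane of the two relay lenses; the article does not give the prototype's actual geometry, and the function names and focal lengths here are hypothetical.

```python
import numpy as np

# Paraxial ray-transfer (ABCD) matrices acting on the ray vector [height, angle].
def prop(dist):
    """Free-space propagation over `dist` metres."""
    return np.array([[1.0, dist], [0.0, 1.0]])

def lens(power):
    """Thin lens with optical power in diopters (1/m)."""
    return np.array([[1.0, 0.0], [-power, 1.0]])

def relay_with_tunable_lens(f1, f2, tunable_power):
    """Object at the front focal plane of relay lens 1; tunable lens at the
    shared focal plane; returns (image distance after lens 2, magnification)."""
    # Rightmost matrix acts first: object -> lens 1 -> tunable lens -> lens 2.
    m = lens(1 / f2) @ prop(f2) @ lens(tunable_power) @ prop(f1) @ lens(1 / f1) @ prop(f1)
    a, b, c, d = m.ravel()
    dist = -b / d        # extra propagation that makes the system imaging (B = 0)
    mag = a + dist * c   # lateral magnification at that image plane
    return dist, mag


if __name__ == "__main__":
    for p in (-4.0, 0.0, 4.0):  # hypothetical tunable-lens powers, diopters
        dist, mag = relay_with_tunable_lens(0.05, 0.05, p)
        print(f"P = {p:+.0f} D: image {dist * 1000:.1f} mm after lens 2, magnification {mag:.2f}")
```

In this idealized layout, the tunable lens's power P shifts the image plane by -P·f2² while the magnification stays at -f2/f1, so the display can sweep through depth without rescaling the picture.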

The researchers tested their optical setup with volumetric “stock images” of a market scene, a famous statue by Michelangelo, a set of concentric circles and a Siemens starburst target. The reconstructed 3D image measured 21.4×21.4×32 cm, equivalent to 28.6 megapixels, or some 36 times the resolution of the original image.
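The reported figures can be unpacked with simple arithmetic. If the 36-fold figure refers to pixel count, it corresponds to a 6× gain along each lateral axis and implies an original image of roughly 0.79 megapixels. The pitch estimate below rests on a further assumption of our own: that the 28.6 megapixels form a square grid across the 21.4 cm face of the image.

```python
import math

reconstructed_px = 28.6e6  # reported equivalent pixel count
gain = 36                  # reported resolution gain (assumed to be in pixel count)
side_mm = 214.0            # reported lateral size, 21.4 cm

original_px = reconstructed_px / gain
per_axis_gain = math.sqrt(gain)

# Assumption (ours): the 28.6 MP are laid out as a square grid over the
# 21.4 x 21.4 cm face; the article does not say how they are distributed.
pixels_per_side = math.sqrt(reconstructed_px)
pitch_um = side_mm / pixels_per_side * 1000

print(f"implied original: {original_px / 1e6:.2f} MP")
print(f"per-axis gain: {per_axis_gain:.0f}x")
print(f"implied pitch: {pitch_um:.0f} um")
```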

Lee and his colleagues acknowledge that more work is needed before the optical system is ready for commercial use. First, he says, researchers will need to simplify the multifocal display so that it fits into a compact projector. Second, the lens array and focus-tunable lens must be optimized to reduce aberrations. Lee is optimistic that commercial hardware for this type of 3D projection could be available within two or three years.