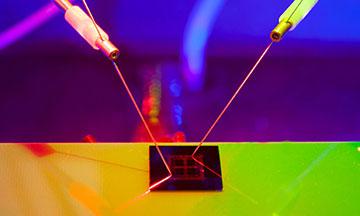

The prototype technology brings together imaging, processing, machine learning and memory in one electronic chip, powered by light. [Image: RMIT University]

If scientists could train artificial intelligence (AI) systems to remember the images captured by their photodetectors and learn from them, all in one package, the technology would be one step closer to an artificial brain. But making sense of those images usually takes huge data sets and so much computing power that the images must be offloaded elsewhere for processing, unlike in a natural brain.

Now, researchers in Australia have developed a neuromorphic imaging chip that performs image pre-processing and recognition by itself (Adv. Mater., doi: 10.1002/adma.202004207). The optically driven chip, made of two-dimensional black phosphorus, demonstrates a way to combine AI software and imaging hardware in a brain-like package that could run autonomously.

An interdisciplinary field

The effort by the team at RMIT University in Melbourne, Australia, is part of a broader, interdisciplinary field called neuromorphic computing or neuromorphic engineering, which seeks to build electronic systems that process visual and auditory signals in real time, as the human brain does. First conceived in the 1980s, neuromorphic systems have typically relied on back-end supercomputers, which don’t fit into self-contained robots and consume lots of power. More recent efforts to build neuromorphic chip systems blend optical and electronic technologies.

“In our work, we have adopted a unique path of engineering materials to utilize light for neuromorphic computation, artificial intelligence and machine vision,” says Taimur Ahmed, a researcher in RMIT’s Micro Nano Research Facility and one of the lead authors. “Using light signals for computation offers several advantages over the traditional electronic signals, such as high processing speed and low power consumption. Our prototype devices allow in-pixel image pre-processing and neuromorphic computation.”

The special ingredient

A graphic illustration showing how the technology combines the core software needed to drive AI with image-capturing hardware, in a single electronic device. [Image: RMIT University]

To build their chip, the researchers fabricated thin, vertically stacked layers of black phosphorus, the most thermodynamically stable allotrope of that element. Inducing oxidation defects in the 2D sheets makes the black phosphorus generate current when exposed to ultraviolet light. According to Ahmed, the most challenging part of the study was the three years of experimental work needed to develop the wavelength-selective photoresponse of the black phosphorus flakes.

RMIT’s proof-of-concept chip consisted of a 2×2 pixel array of oxidized black phosphorus with gold-chromium electrodes and a substrate made from silicon and silicon dioxide. Pulses of 280-nm-wavelength light triggered the pixels to “write,” while pulses of 365-nm-wavelength light triggered an “erase” function and reset a pixel’s memory. Varying the pulse repetition rates of the triggering beams affected the chip’s short-term and long-term memories, a measure of what AI researchers call synaptic plasticity. Although this prototype chip responded to UV light, Ahmed suggests that black phosphorus could be further modified to work at visible frequencies.
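The write/erase behavior described above can be illustrated with a toy software model. This is a minimal sketch, not the researchers' device physics or their simulation code: the class `ToyPixel`, the helper `run_pulse_train`, the `step` and `tau` constants, the arbitrary time units, and the exponential relaxation between pulses are all assumptions made for illustration. Only the two wavelengths and their roles (280 nm writes, 365 nm erases) come from the article.

```python
import math

WRITE_NM = 280  # wavelength the article says triggers a "write"
ERASE_NM = 365  # wavelength the article says triggers an "erase"


class ToyPixel:
    """Hypothetical one-pixel optical memory; every constant is illustrative."""

    def __init__(self, step=0.2, tau=5.0):
        self.state = 0.0  # normalized stored response, between 0 and 1
        self.step = step  # assumed fractional gain per write pulse
        self.tau = tau    # assumed relaxation time, arbitrary units

    def relax(self, dt):
        """Let the stored state fade exponentially over a time dt (assumption)."""
        self.state *= math.exp(-dt / self.tau)

    def pulse(self, wavelength_nm):
        """Apply one light pulse: 280 nm strengthens the state, 365 nm resets it."""
        if wavelength_nm == WRITE_NM:
            self.state += self.step * (1.0 - self.state)
        elif wavelength_nm == ERASE_NM:
            self.state = 0.0
        else:
            raise ValueError(f"toy model has no response at {wavelength_nm} nm")


def run_pulse_train(pixel, wavelength_nm, n_pulses, interval):
    """Send n_pulses separated by `interval`; return the state after the last one."""
    for _ in range(n_pulses):
        pixel.relax(interval)
        pixel.pulse(wavelength_nm)
    return pixel.state


if __name__ == "__main__":
    # Same number of write pulses, different repetition rates.
    fast = run_pulse_train(ToyPixel(), WRITE_NM, n_pulses=10, interval=0.5)
    slow = run_pulse_train(ToyPixel(), WRITE_NM, n_pulses=10, interval=10.0)
    print(f"state after fast train: {fast:.3f}")
    print(f"state after slow train: {slow:.3f}")

    # A 2x2 array, matching the prototype's pixel count: write one pixel, then erase it.
    array = [[ToyPixel() for _ in range(2)] for _ in range(2)]
    array[0][1].pulse(WRITE_NM)
    array[0][1].pulse(ERASE_NM)
    print("pixel (0, 1) after erase:", array[0][1].state)
```

In this toy model, closely spaced write pulses build up a stronger stored state than the same number of widely spaced pulses, because less of the state fades between pulses. That is only a loose analogy for the rate-dependent short-term and long-term memory the article describes.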

A 4-pixel camera is hardly “image forming,” but the RMIT researchers ran computer simulations that scaled the technology up to a 28×28 pixel image (784 pixels) that would train the chip’s neural network to recognize a standard photograph. Ahmed says that physical arrays of 28×28 pixels or larger could be built with the technology.

“We are working on further extending this research to develop an AI chip which is fully unsupervised, learns from its environment and makes decisions in real time,” Ahmed adds.

Researchers from Colorado State University, USA; Northeast Normal University, China; and the University of California, Berkeley, USA, also participated in the study.