Feature

Lidar in Space: From Apollo to the 21st Century

Over the last four decades, spaceborne lidar instruments have evolved beyond their original application—altimetry—to tracking glacier melting, gauging wind speeds and spotting snow on Mars.

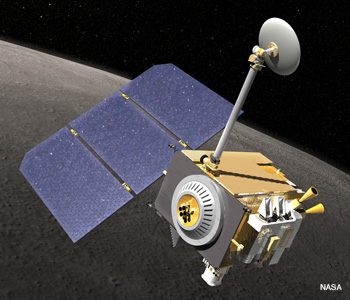

Artist’s conception of the Lunar Reconnaissance Orbiter surveying the Moon.

When laser-ranging technology was first developed in the 1960s, no one could have envisioned that it would become a key tool for exploring Earth and its neighboring planets from space. From hundreds of kilometers away, lidar systems can give scientists a broad overview of features that would have escaped notice from lower altitudes.

Optica Members get the full text of Optics & Photonics News, plus a variety of other member benefits.