Feature

A Pre-History of Computer-Generated Holography

This month’s edition of the journal Applied Optics is a special issue devoted to the contributions of the late Emmett Leith. As a tribute to his dear friend, the author discusses the significance of Emmett’s work and his own role in inventing computer-generated holography.

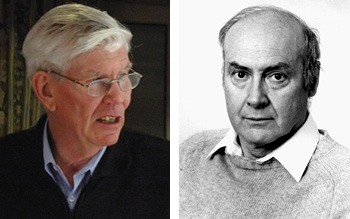

Adolf Lohmann and Emmett Leith

In the 60 years since Gabor’s invention, holography has become much more than a way to capture and visualize 3D information. Its tools have been applied to many fields of optics and photonics, including optical data and image processing, invariant pattern recognition, optical fuzzy logic control, super resolution and imaging.

Optica Members get the full text of Optics & Photonics News, plus a variety of other member benefits.